Work the Wobble: Drawing with Line-us

This spring I took a small wobbly drawing robot to a cabin in the redwoods, to reflect and find out what kind of art we could make together.

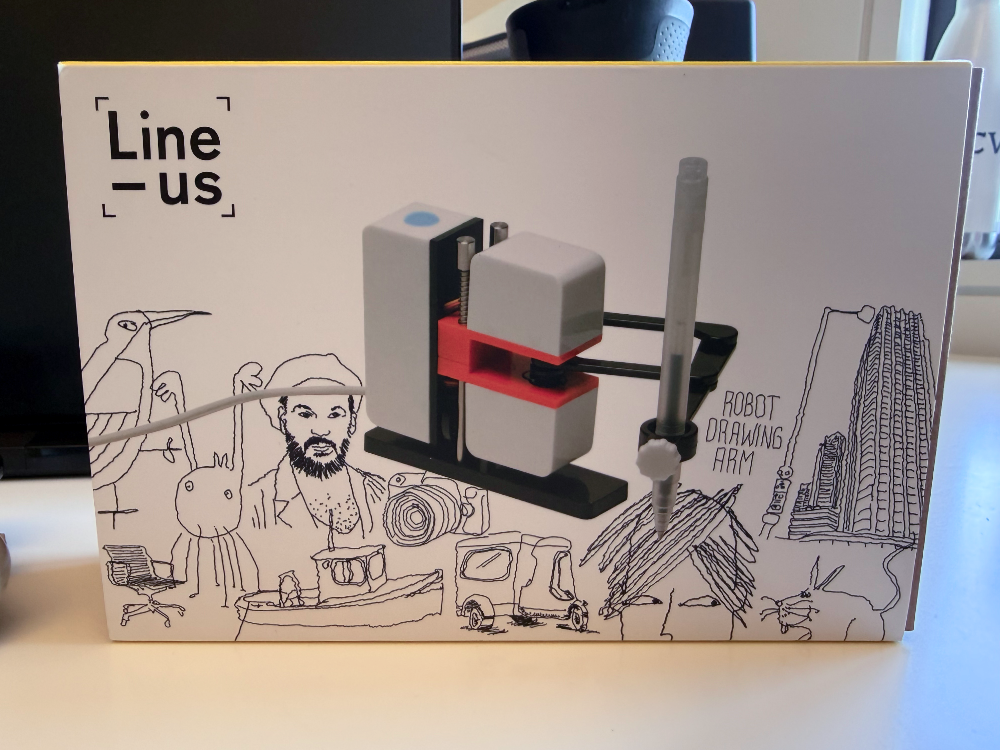

Line-us is a portable, computer-connected drawing arm with a characteristically wobbly output. The hyphen does double duty — "Line + us" (online by design, share drawings with the community) and "Linus" as a proper name. I'll use it as a proper name. After recently finishing Carl Lostritto's Computational Drawing, I wanted to spend some quality time with this little machine and, paraphrasing Lostritto, work through what it means to make a drawing with a computer and compute in the space of drawing.

I'm a fan of styles popular on sites like Generative Hut — tight geometry, clean pen plotter output. The grown-up version of the Logo turtle graphics I drew on an Apple II as a kid. So that's what I tried first. I wrote some code to send those drawings to Line-us, and ran a bunch of examples through it.

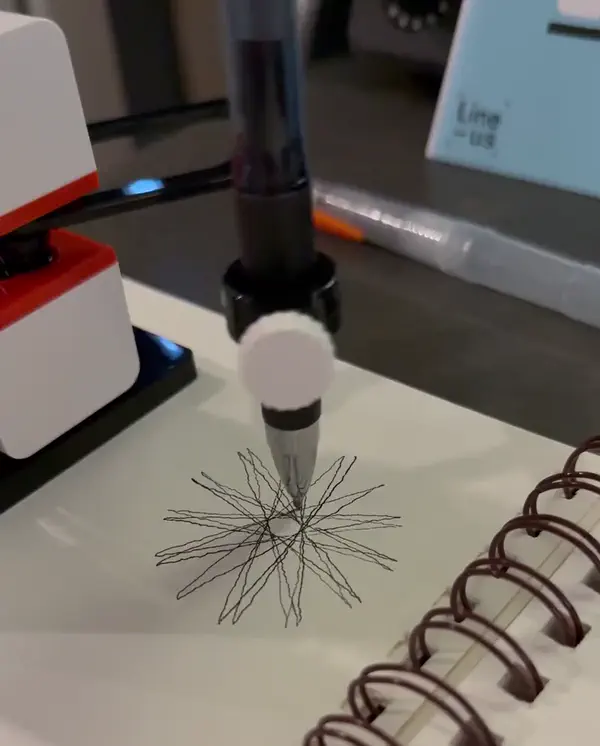

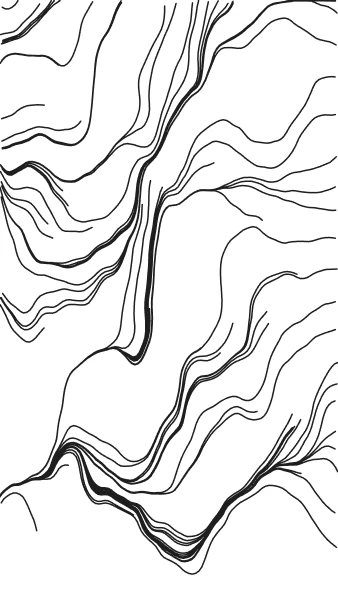

Line-us doesn't really cooperate with precision though. Sharp corners overshoot. Small straight lines aren't very straight. The results looked like a wobbly robot poorly attempting someone else's clean plotter aesthetic. So I set myself a challenge: find a subject that cooperates with the wobble. The figurative work people make with Line-us is just perfect, but I wanted to stay in the generative and algorithmic space. Work with the imprecision. Embrace it. Make it a feature, not a bug.

Redwoods and Fault Lines

For years I've taken short art and music retreats: focused time, usually far away, in the woods or by the ocean. This time I headed up into the redwoods near Soquel. The plan was to go hiking, find inspiration, and get the creative juices flowing. While there, I was thinking about what's distinctively California. Moving through the redwoods and over the Loma Prieta earthquake fault line, I remembered the 1989 quake, the one that interrupted the World Series and collapsed the Cypress Freeway in Oakland. Its epicenter sits right under that forest.

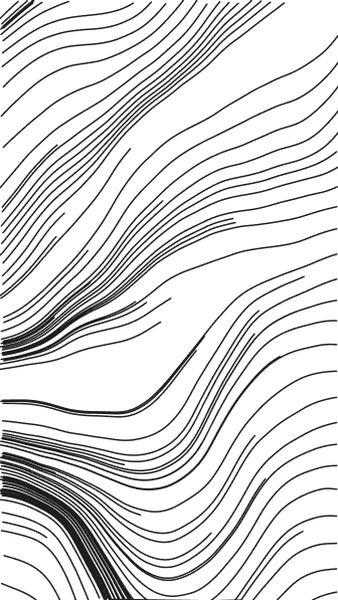

Faults are lines, roots are lines, but none of those lines are straight. No precision geometry. But the natural algorithms in play around me seemed harmonious and beautiful. The branches of the trees. The ripples in the water. These are what I wanted to draw.

roots grip the cliff face

not knowing which way is down

they try every way

The Haiku Loop

Lately I've been experimenting with AI-assisted creative coding; see Voice-Coding Synthesizer Fail (2024) and Dots Study (2025). The models weren't quite there yet for those. This year, they got a lot better.

Now on to drawing. First pass was Claude Code's algorithmic art skill — an interesting study in prompt engineering. The skill writes a manifesto-style philosophy essay, then expresses the philosophy as a sketch in p5.js (a friendly tool for making art with code). Some of the bombastic register is prescribed by the skill's prompt, not discovered, which is worth knowing before you take any of its output seriously.

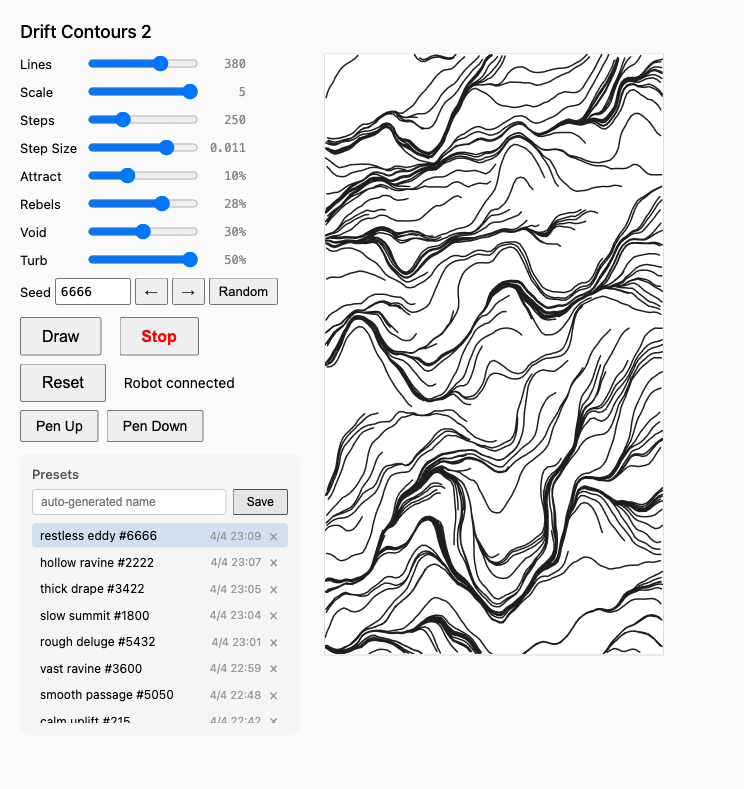

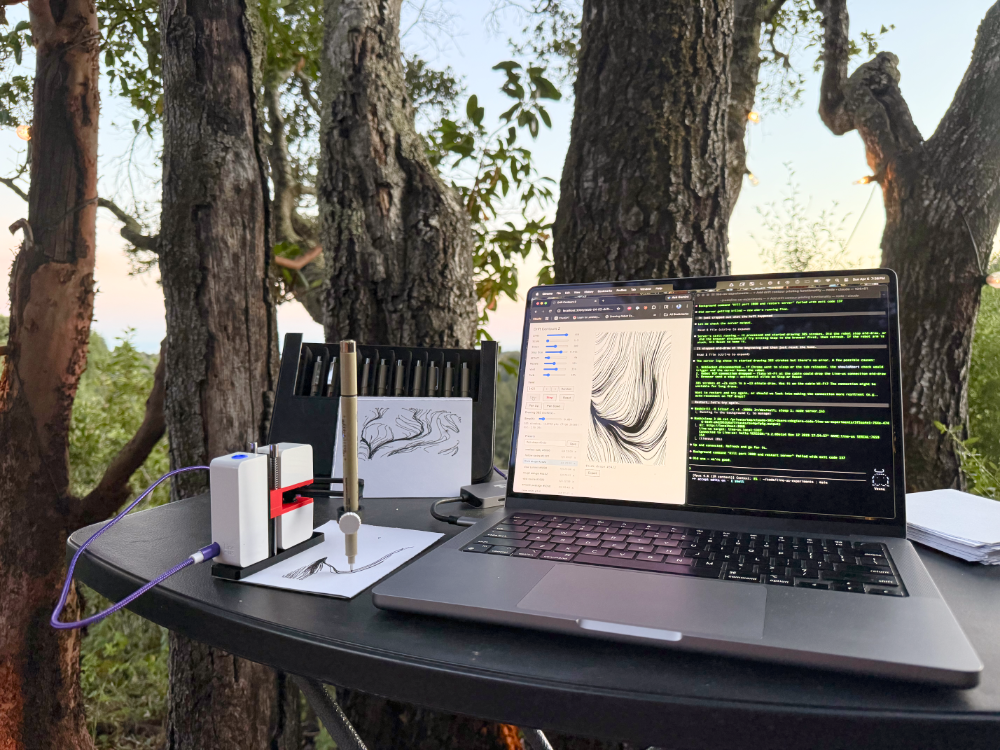

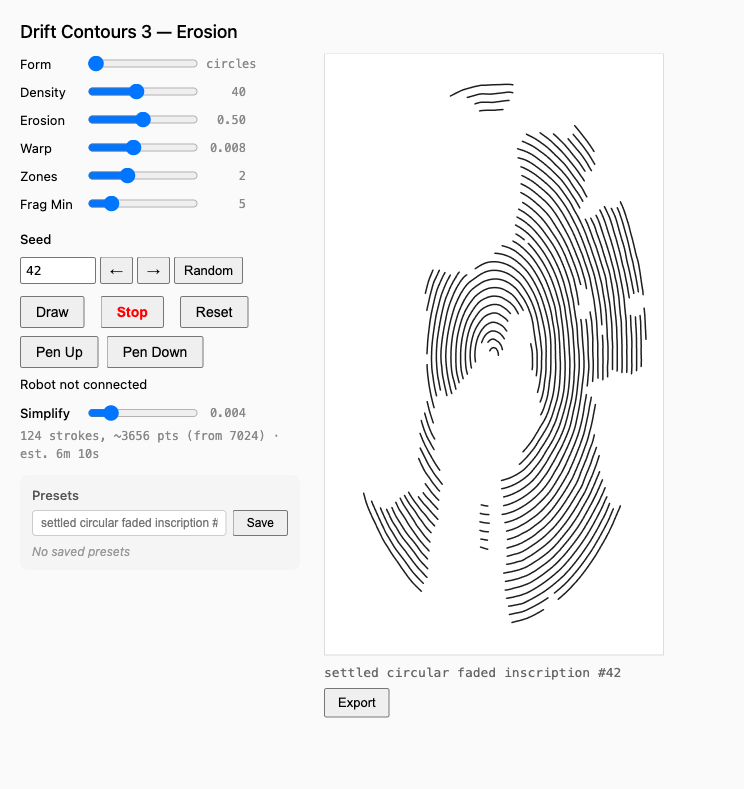

I added my own layer around the skill and the Line-us open-source project. The job: get a browser drawing into the arm in a language it can follow. A small Node bridge translates p5.js sketches into G-code over TCP, with a bit of polyline simplification server-side so the arm draws cleaner curves. This helped a lot. On top of that, a visual interface (shown): parameter sliders, a preset library, seeds for reproducibility, and randomization. Essentially a testbed for steering a canned base algorithm toward an aesthetic I have in my head.
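To make the simplify-then-translate step concrete, here is a minimal sketch in Python rather than the Node bridge itself. It assumes Ramer-Douglas-Peucker as the simplification method (the bridge's actual algorithm isn't specified here) and assumes a G-code dialect where `G01 X.. Y.. Z..` moves the arm and the Z value raises or lowers the pen. The names `simplify`, `to_gcode`, `PEN_UP_Z`, and `PEN_DOWN_Z` are hypothetical.

```python
import math

PEN_DOWN_Z = 0     # assumed Z value for "pen on paper"
PEN_UP_Z = 1000    # assumed Z value for "pen lifted"


def point_segment_distance(p, a, b):
    """Distance from point p to the line segment a-b."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    if dx == 0 and dy == 0:
        return math.hypot(px - ax, py - ay)
    # Project p onto the segment and clamp to its endpoints.
    t = ((px - ax) * dx + (py - ay) * dy) / (dx * dx + dy * dy)
    t = max(0.0, min(1.0, t))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))


def simplify(points, tolerance):
    """Ramer-Douglas-Peucker: keep only points that stray more than
    `tolerance` from the chord between the endpoints of their run."""
    if len(points) < 3:
        return list(points)
    first, last = points[0], points[-1]
    index, worst = 0, 0.0
    for i in range(1, len(points) - 1):
        d = point_segment_distance(points[i], first, last)
        if d > worst:
            index, worst = i, d
    if worst <= tolerance:
        return [first, last]
    left = simplify(points[: index + 1], tolerance)
    right = simplify(points[index:], tolerance)
    return left[:-1] + right  # the split point appears in both halves


def to_gcode(polyline):
    """Travel to the start with the pen up, draw every point with the
    pen down, then lift the pen at the end."""
    if not polyline:
        return []
    x0, y0 = polyline[0]
    xn, yn = polyline[-1]
    lines = [f"G01 X{round(x0)} Y{round(y0)} Z{PEN_UP_Z}"]
    lines += [f"G01 X{round(x)} Y{round(y)} Z{PEN_DOWN_Z}" for x, y in polyline]
    lines.append(f"G01 X{round(xn)} Y{round(yn)} Z{PEN_UP_Z}")
    return lines


wobbly = [(1000, 0), (1100, 2), (1200, -1), (1300, 300), (1400, 301), (1500, 300)]
clean = simplify(wobbly, tolerance=5)
commands = to_gcode(clean)
```

Fewer points means fewer places for the arm to stop, start, and overshoot, which is one plausible reason simplification helps. The tolerance is in the same units as the coordinates, so it needs tuning against the real drawing area.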

What came back first was basic flow-field work. Not a grand piece yet. Flow fields are well-trodden territory — from Ken Perlin's noise function (1983) to Dan Shiffman's Nature of Code (orig. 2012). Tyler Hobbs wrote an excellent primer on them in 2020 and released Fidenza the year after (a striking flow-field series that became a touchstone of generative art). But these early outputs did hint at landscape, coastline, roots. The substrate could cooperate with the wobble, even if the compositions themselves were pretty generic.
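For readers new to the idea, a flow field assigns a direction to every point on the page, and each line is drawn by repeatedly stepping along the local direction. The sketch below is a bare-bones Python illustration, not the p5.js sketch described here. It swaps Perlin noise for a smooth sine/cosine field to stay dependency-free, and every name and parameter (`field_angle`, `trace`, `flow_field`, `scale`, `step_len`) is made up for the example.

```python
import math
import random


def field_angle(x, y, scale=0.01):
    """Direction (radians) at a point. A smooth trig function stands in
    for Perlin noise here; that substitution is an assumption."""
    return math.pi * (math.sin(x * scale) + math.cos(y * scale))


def trace(start, steps=50, step_len=4.0):
    """Follow the field from `start`, one short step at a time."""
    x, y = start
    path = [(x, y)]
    for _ in range(steps):
        angle = field_angle(x, y)
        x += step_len * math.cos(angle)
        y += step_len * math.sin(angle)
        path.append((x, y))
    return path


def flow_field(seed, n_lines=20, width=400, height=300):
    """Seeded start points make the whole drawing reproducible."""
    rng = random.Random(seed)
    starts = [(rng.uniform(0, width), rng.uniform(0, height)) for _ in range(n_lines)]
    return [trace(start) for start in starts]


drawing = flow_field(seed=7)
```

Because the only randomness is in the seeded start points, the same seed always returns the same set of polylines, which is what makes a preset library and side-by-side comparisons possible.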

Meanwhile I'd been doing my own reading on computational creativity — how to facilitate aha moments, how to build tools that accelerate human-in-the-loop creativity. Not the REPLACE ALL HUMANS headline story about AI, but the one that actually gives me hope. Hiking helped me process. The first instantiation that felt like mine was the haiku loop.

The mechanics are simple. I vary the sketch's parameter ranges and the seed ten times, screenshot each, and hand the batch to an LLM to write a haiku.

a cartographer

draws the coast from memory

the map is better

The poems are cryptic, but that's part of the point. Next, I feed each image and the poem into a multimodal LLM that can "see" the image and evaluate the composition through the lens of the poem. The poem becomes a creative constraint — something to mutate the algorithm or parameter ranges toward, steering future optimization rounds. The system runs several rounds automatically. At any point I can step in, add critique, redirect. At the end I decide which mutations stick. In the future, farming out the evaluation step to a separate process with a cleaner context window would probably improve novelty and keep the evaluator from seeing the generator's thumb on the scale.
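The control flow of the loop can be sketched in a few lines of Python. This shows only the shape of the process under stated assumptions: the four steps (`render`, `write_haiku`, `critique`, `mutate`) are passed in as plain functions, standing in for the sketch renderer and the LLM calls, and all names are hypothetical. No real model API is shown.

```python
import random


def sample_params(ranges, rng):
    """Draw one parameter set from the current ranges."""
    return {name: rng.uniform(lo, hi) for name, (lo, hi) in ranges.items()}


def haiku_round(ranges, seed, render, write_haiku, critique, mutate, batch_size=10):
    """One round: render a batch, get one poem for the batch, read every
    image through that poem, then propose new parameter ranges."""
    rng = random.Random(seed)
    batch = [
        render(sample_params(ranges, rng), rng.randrange(2**31))
        for _ in range(batch_size)
    ]
    poem = write_haiku(batch)                            # assumed LLM call
    notes = [critique(image, poem) for image in batch]   # assumed multimodal call
    return poem, mutate(ranges, poem, notes)


def run_loop(ranges, rounds, **steps):
    """Run several rounds and keep the poems as a running memory.
    A person would review the final ranges before keeping them."""
    history = []
    for round_index in range(rounds):
        poem, ranges = haiku_round(ranges, seed=round_index, **steps)
        history.append(poem)
    return ranges, history
```

Passing the steps in as functions keeps the evaluator swappable, so moving the critique into a separate process with its own context would only mean handing `run_loop` a different `critique`.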

One example of the loop actually working. The first round of poems produced this:

old creek bed empties

stones remember where water

once chose to linger

The compositions at that point had plenty of convergence around the attractor points, but no counterbalancing emptiness — no dry beds, no place where nothing was. The poem named a missing quality the analytical framing hadn't reached. I added a repulsion zone. The next round's compositions had a place where nothing was.
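One way a repulsion zone can work in a flow field, sketched here as an assumption rather than the actual change made to the sketch: inside a given radius, blend each flow direction with a push away from the zone's center, stronger the closer you get. The function name `repel` and its parameters are hypothetical.

```python
import math


def repel(direction, point, center, radius, strength=1.0):
    """Bend a unit flow direction away from `center` when `point` lies
    inside `radius`. The push fades linearly to zero at the edge."""
    dx, dy = point[0] - center[0], point[1] - center[1]
    dist = math.hypot(dx, dy)
    if dist >= radius or dist == 0:
        return direction  # outside the zone (or exactly on center): unchanged
    falloff = strength * (1 - dist / radius)
    vx = direction[0] + falloff * dx / dist
    vy = direction[1] + falloff * dy / dist
    norm = math.hypot(vx, vy)
    if norm == 0:
        return direction  # push exactly cancels the flow; leave it alone
    return (vx / norm, vy / norm)


# A line heading straight up, just to the right of the zone's center,
# gets deflected further to the right.
bent = repel((0.0, 1.0), point=(10, 0), center=(0, 0), radius=100)
```

Lines that pass near the zone curve around it, so the emptiness reads as something the lines respond to rather than a hole cut out afterward. A higher `strength` clears the zone more firmly.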

Did the technique actually work? Honestly — more than I expected. Not every poem landed. A couple were more mood than instruction. But enough of them named missing qualities that the "it needs more density" framing would never have reached. The best poems didn't describe the problem. They described a way forward, abstractly, like Brian Eno and Peter Schmidt's Oblique Strategies, in a language the analytical mind wouldn't have gotten to on its own.

rain on the hillside

a thousand small decisions

all lead to the sea

Fitting for the subject matter. And for how the loop itself works: each round builds on the last, and the poems accumulate into an aesthetic memory. Round 7 knew to stop adding features because Rounds 1 through 6 had established the instrument's range.

The Library Is the Work

High on a small creative win, I let my research grow into a kind of meta study. In the LLM literature I reviewed, nobody seemed to be doing exactly this. The closest formal cousin is what researchers call random-object injection (Mehrotra et al., 2024): forcing an unrelated word like "cork" into a prompt to break associative ruts. But those papers treat random stimulus as an input to generation: "incorporate this into your output." The haiku loop uses it as a tool for evaluation. You generate first, then view through the lens of the poem. That's a way of seeing, not a way of making. None of the papers I reviewed framed creativity that way.

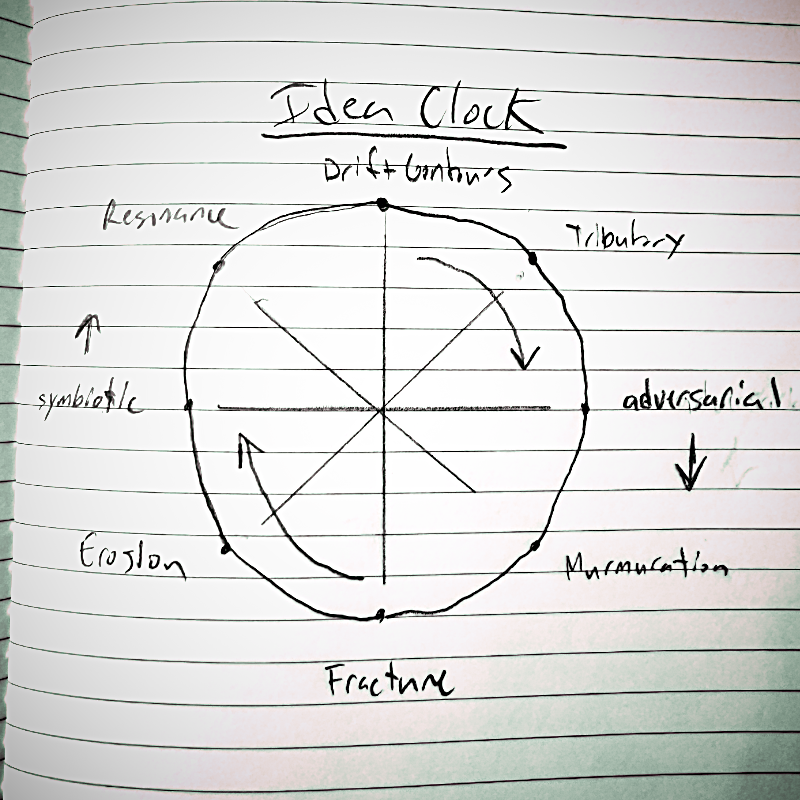

The next breakthrough was for building variations of an idea rather than refining one. I call it the idea clock. Place the base algorithm at 12 o'clock. At each even hour after that (two, four, six, eight, ten), create a variation. Two o'clock should be similar to twelve. Four o'clock should be similar to two, but proportionally more different from twelve. By six o'clock the algorithm should be maximally different from the original. As the hour hand swings back through eight and ten toward twelve, the variations converge again. The constraint is topological, not content-based. This sets up a different kind of creative pressure: not "generate a better version of the core idea" but "generate a connected family of related ideas, and the full set must close a loop."
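The clock's constraint can be written down as a small piece of arithmetic. The sketch below assumes "proportionally more different" means linear in the shorter way around the dial, which is one reading among several, and the function names are hypothetical.

```python
def clock_distance(hour_a, hour_b, hours=12):
    """Shortest way around the dial between two hours."""
    diff = abs(hour_a - hour_b) % hours
    return min(diff, hours - diff)


def target_difference(hour, base=12, hours=12):
    """How different a variation should be from the base algorithm:
    0.0 at the base, 1.0 at the antipode (assumed linear in between)."""
    return clock_distance(hour, base, hours) / (hours / 2)


positions = [12, 2, 4, 6, 8, 10]
targets = {hour: round(target_difference(hour), 2) for hour in positions}
# targets == {12: 0.0, 2: 0.33, 4: 0.67, 6: 1.0, 8: 0.67, 10: 0.33}
```

Each position is also two hours from its neighbors, so adjacent variations stay close to each other while the family as a whole travels out to six o'clock and back.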

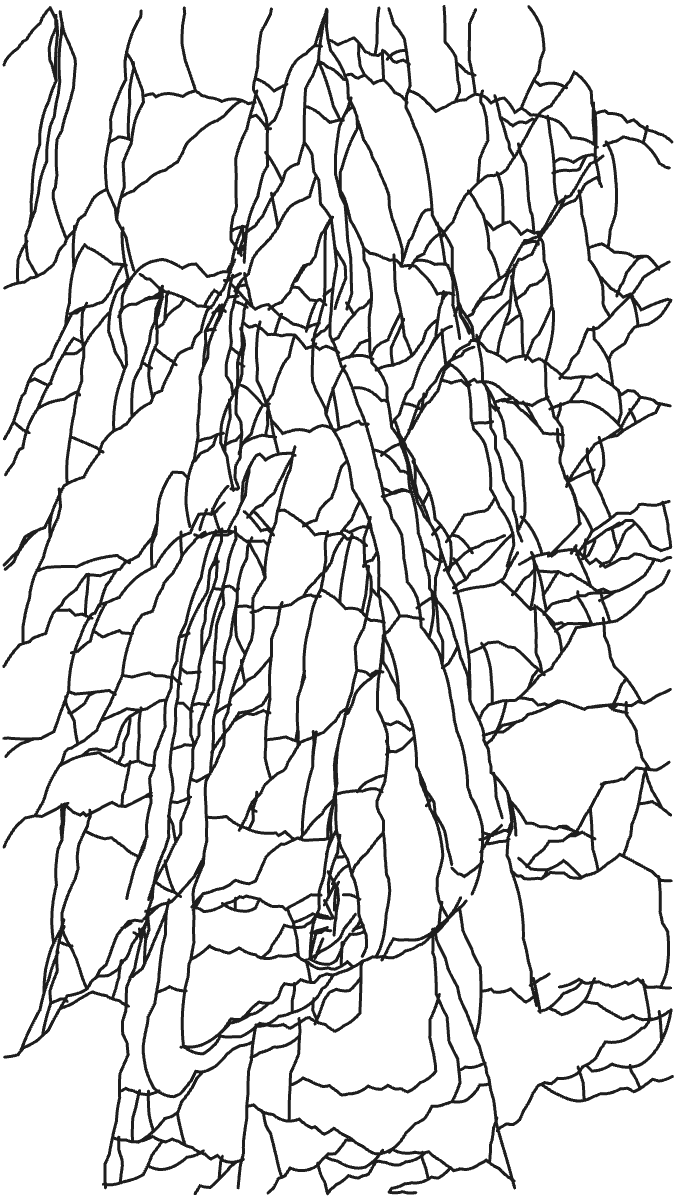

Here is the thing about how I used the clock. My prompt set only the structure — cyclic topology, proportional distance, return to origin. I didn't seed imagery. I didn't say "make six o'clock about bark," didn't say "fault lines," didn't say "work against the robot." What came back was an antipode at 6:00 called Fracture — an algorithm whose marks are straight segments meeting at acute angles, which is the one thing Line-us is worst at drawing. Every other position on the clock described a symbiotic or complementary relationship with the wobble. Fracture described an adversarial one. The library found the tension on its own.

And that adversarial relationship with the robot turned out to be the point. Lostritto poses a question early in Computational Drawing: what if the library of functions is the work? I built the clock as a system. That system is my library, and the library produced Fracture — a point in the creative space my analytical mind wouldn't have gotten to directly.

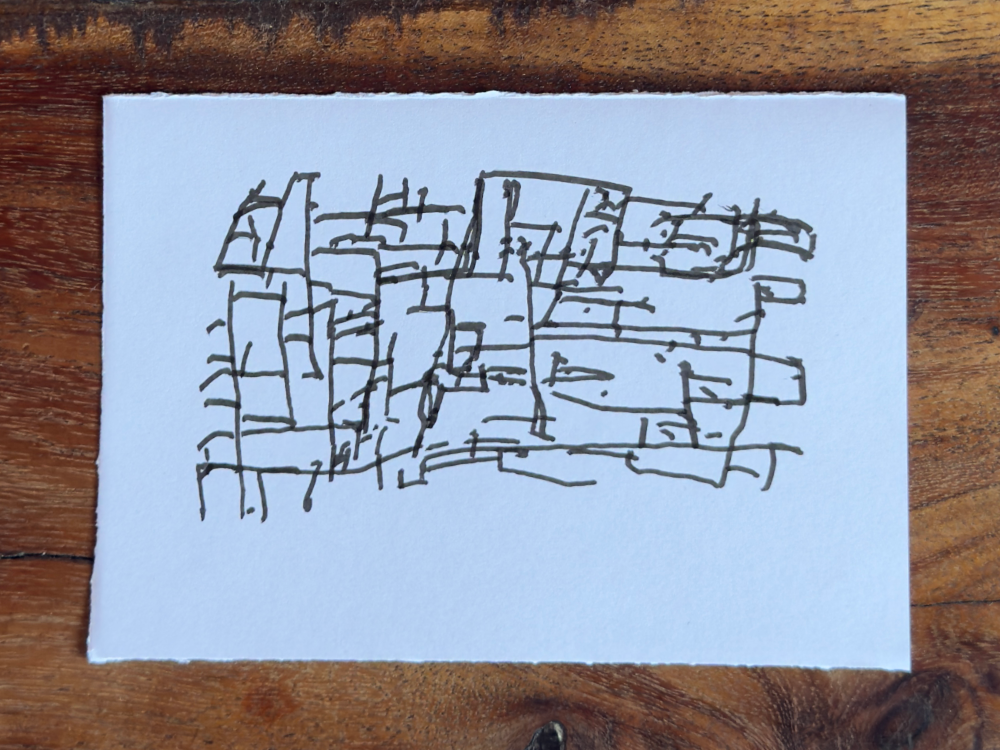

The Argument Is the Drawing

Line-us fights the algorithm at Fracture. Straight lines wobble. Sharp corners overshoot — the arm's momentum carries the pen past the vertex, leaving a hook at each junction. The geometric intent collides with mechanical reality in every mark. The marks on the paper end up looking like a geologist's field sketch — the kind of diagram drawn quickly with a shaking hand against a boulder, not the clean version from the textbook. The wobble doesn't enhance the intent. It argues with it. That argument, the visible negotiation between algorithm and arm, shapes what ends up on the page.

But where is the drawing? Lostritto asks a similar question and answers it in the negative: a drawing on screen is a model, not the drawing; the marks alone aren't the drawing; the drawing is never finished — it lives in a reading in the observer's mind. I take the interplay as given. But with an imperfect collaborator, the model can't be set aside. The adversarial relationship between model and robot is the drawing. Model, marks, perception: the drawing is the union. The conversation.

The clock is a kind of dance with this structure, adding a dimension of variation over time. Six positions on a circle, each a neighbor to two others and the antipode of one. No best, no direction of improvement. You can enter anywhere and traverse either way. Some positions cooperate with the arm. Fracture argues with it. Both are part of the dance. Doshi and Hauser (2024) name the trap: without that range, AI output homogenizes. The clock builds range in by design.

Multi-agent creativity research (EMNLP survey 2025) imagines collaboration as software talking to software. Line-us is something else — hardware with its own voice. The form factor presents certain constraints and certain opportunities: small, imprecise, portable. That's its character.

So what is the drawing? It's what happens when model, marks, and perception meet and negotiate. The argument is the drawing. Hybridity.

Closing the Loop

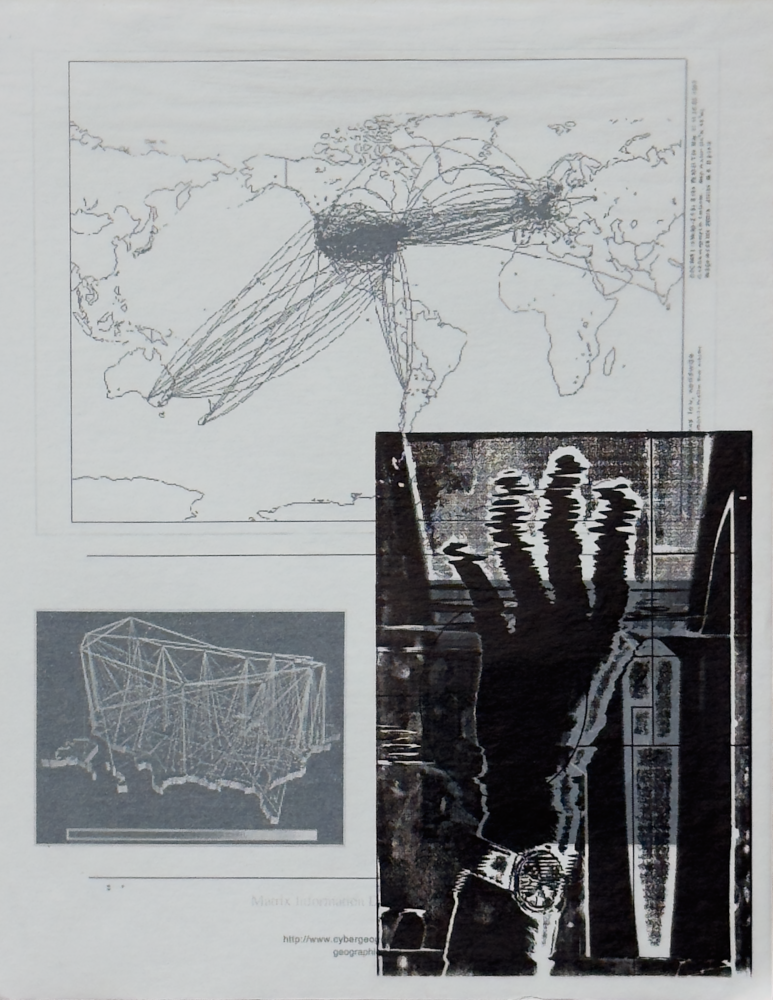

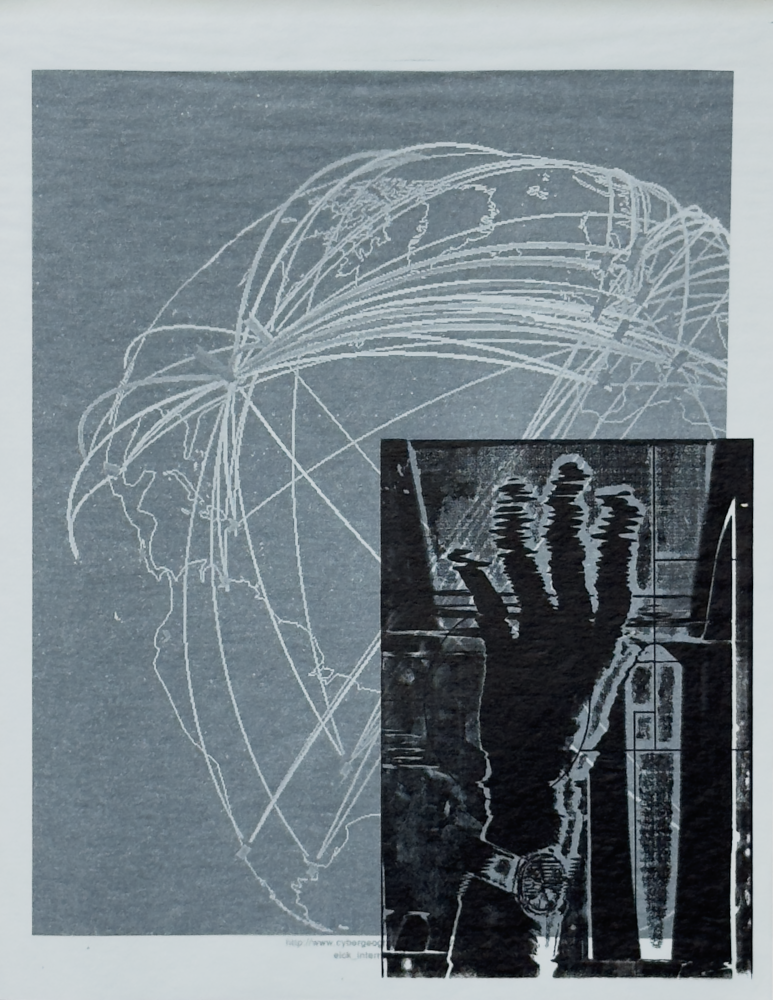

In a college printmaking course, I scanned my hands, moving them around inside the scanner to distort the image. Then I edited the image in Photoshop, printed it to an overhead transparency, applied that to a photopolymer plate, inked the plate and printed it on a press, used a computer printer to realize more found images, and collaged them all together.

That hybrid loop, moving in and out of the digital and the physical, is what held my interest. Each platform in isolation just wasn't enough. What compelled me was the collaboration across disciplines, each tool's voice. I think back to Marshall McLuhan's "the medium is the message." Every tool in these loops (scanner, press, system, algorithm, wobbly arm) shapes what can be said with it. Each leaves its fingerprint. The loop is where they dialogue.

I'm still doing this, almost 30 years later. Making sense of how I relate to the world around me and what part the tools play. Asking "What's the message?" With tools this capable, the answer matters more than ever.

old roots underground

they grip what they cannot see

and still the tree grows

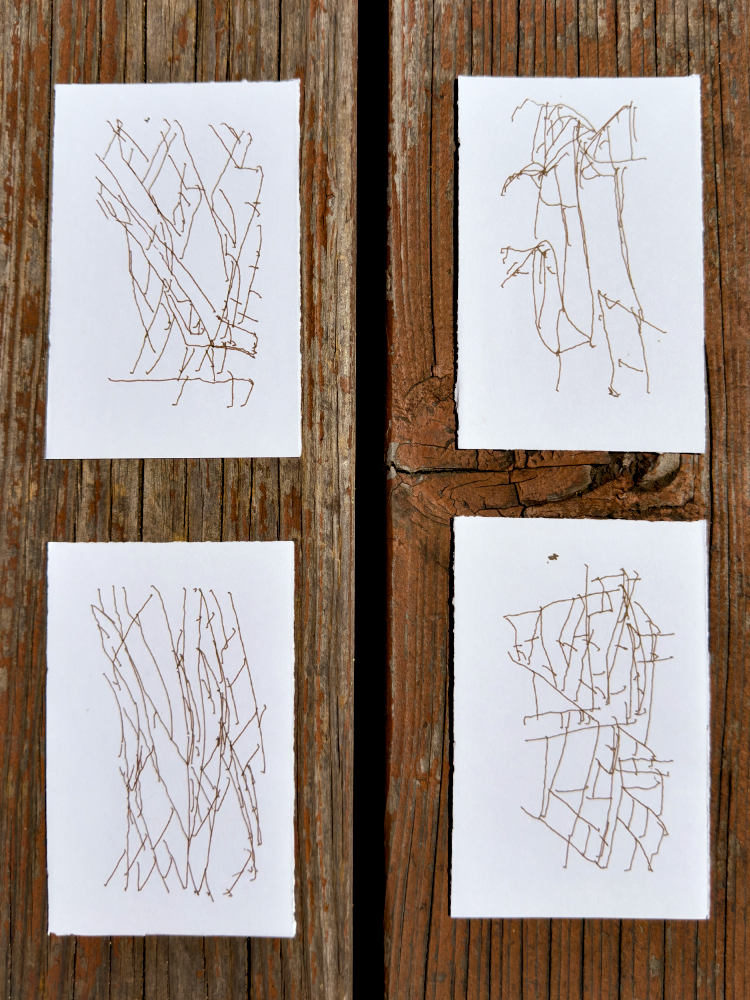

Four of the six clock positions are still undrawn. Erosion is the one most miraculously aligned with the imagery that surrounded me on the trip. Fully unprompted. Fully emergent.

I came to this retreat to find what would work with Line-us. The woods inspired. Research on creativity gave me context. I built techniques I think are novel and useful: the haiku loop and the idea clock. I think the drawings work — they cooperate with the wobble, and they finish in the eye. Along the way, I strengthened my understanding of what a drawing is and is not.

Since returning, I've already taken the loop further, combining some of these drawings with my other print work. Old roots, new branches. Same tree.

— Chris De Giere, Soquel, CA. Spring 2026